Introduction to Strategy

Strategy is a cohesive response designed to address significant challenges. Effective strategy entails addressing identified high-stakes challenges through a coherent mix of policy and action. It starts with a precise diagnosis of the biggest challenge faced, is followed by a guiding policy that outlines solutions, and culminates in coherent actions aimed at overcoming the challenge. So, if this defines a good strategy, one might wonder, what does a bad strategy look like?

Bad Strategy

Richard Rumelt’s book Good Strategy/Bad Strategy is perhaps better known for its examples of what not to do than for its insights into good strategy. Many readers find these examples strikingly relevant to their own organizational struggles. Below is a list of common strategic missteps highlighted in his work:

Failure to Face the Challenge: Strategies that fail to clearly identify or address the core challenges facing the organization often lead to vague objectives without actionable plans.

Ignoring Obstacles: Strategies that overlook significant barriers or challenges that could derail the plan often lead to unrealistic expectations and strategic failures.

Mistaking Goals for Strategy: Setting high-level goals, such as profit targets or market share increases, without outlining specific actions or policies to achieve these goals, confuses outcomes with strategic plans.

Fluff: Using jargon or buzzwords might sound impressive but often lacks clear, practical implications or actionable content. This fluff tends to obscure the absence of substantive strategy. An extension of this is the misuse of vision and mission statements as substitutes for actual strategic planning, where they are presented as strategy without underpinning actions.

Template-Style Strategy: Adopting generic strategies not tailored to the company’s specific circumstances can lead to misaligned efforts and wasted resources.

No Coherent Action Plan: Listing desired outcomes without linking them to coherent, coordinated actions that can realistically achieve them.

The Laundry List: Crafting a strategy composed of a long list of unrelated tasks or initiatives, which lacks focus and dilutes resources, thereby making it difficult to achieve significant results in any area.

Neglecting Organizational Dynamics: Crafting strategies that are incompatible with the company's culture or poorly communicated, leading to resistance and misalignment.

Misjudging Resources and Competition: Planning based on unrealistic assumptions about resource availability and underestimating the strength and strategies of competitors.

Short-termism: Focusing solely on short-term gains at the expense of long-term sustainability.

Effective strategy requires a clear understanding of the competitive landscape and internal capabilities, along with objectives that can adapt to changing circumstances. So what is a good strategy?

Components of a Good Strategy

Diagnosis. Guiding policy. And coherent actions.

Diagnosis involves understanding the nature of the challenge that needs to be addressed. It's about pinpointing the key issue and deciding what aspects of reality to focus on to develop a strategic response. For example, a company may identify that its primary challenge is declining market share due to intensified competition from tech-savvy newcomers.

The guiding policy is the approach chosen to navigate the challenge identified during the diagnosis. It dictates how the challenge will be managed and establishes a framework for subsequent actions. A firm might decide to differentiate its product line through advanced technology and superior customer service as a policy to regain market share.

Coherent actions are the specific steps taken to implement the guiding policy and directly address the challenge identified in the diagnosis. Actions must be well-coordinated and logically consistent to be effective. An example would be developing a new series of tech-enhanced products, training customer service teams to provide exceptional service, and launching a targeted marketing campaign to highlight the unique features of these products.

The Magnifying Glass Approach

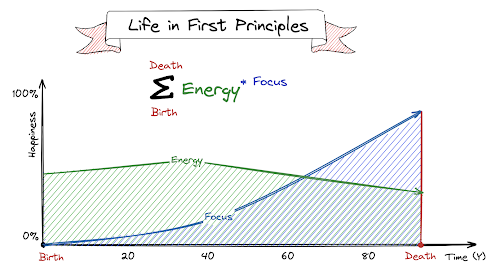

This analogy illustrates the essence of strategy, especially for early-stage startups. Imagine you are trying to light a fire using a magnifying glass. This is similar to bootstrapping a startup with its initial customers.

The Magnifying Glass Analogy for Strategy

Power (The Sun):

In strategy, the source of power refers to the organization's unique strengths or advantages that can be leveraged to address the challenge. This might be a technological edge, a strong brand reputation, or exclusive access to certain resources.

Focus (The Magnifying Glass):

The magnifying glass symbolizes the guiding policy within a strategy. Just as a magnifying glass focuses sunlight into a concentrated beam, the guiding policy focuses organizational resources and efforts towards a specific path. This focus ensures that all actions are aligned and aimed precisely at the challenge.

Target (The Black Thread):

The target in strategy is the challenge or problem identified in the diagnosis. It's the specific issue that the strategy aims to resolve or the opportunity it seeks to exploit. Just as focused sunlight ignites a black thread far faster than it could a large wooden log, a well-crafted strategy directs organizational efforts at a target area they can actually change or influence.

The magnifying glass analogy powerfully illustrates the critical alignment necessary in planning, emphasizing the need to synchronize power, focus, and target to effectively drive desired changes or outcomes. This analogy is particularly resonant in the context of a startup, where resources (or "power") are often limited. In such environments, directing a team's efforts towards highly specific and impactful activities can significantly amplify the effect of these limited resources, much like focusing sunlight through a magnifying glass to burn a thread rather than attempting to set ablaze a large wooden log. Similarly, in a startup, identifying and engaging the right initial user base and concentrating team efforts effectively can accelerate early growth and set the foundation for future success.

Distinguishing Goals from Strategy

Goals such as key performance indicators (KPIs), financial targets, or personal ambitions are not strategies because they describe desired outcomes rather than defining the specific means of achieving them. In the interview, Richard provides a personal anecdote to illustrate the difference between ambitions and strategy. He mentions a list of ambitions he had when he was younger, which included desires like wanting to become a top business school professor, drive a Morgan Drophead Plus 4, learn to fly an aircraft, and marry a beautiful woman, among other things. This example highlights that while these are all valid personal ambitions, they do not constitute a strategy because they lack a clear plan for achievement that addresses specific challenges or opportunities.

Here’s why goals alone do not constitute a strategy:

Goals do not provide a diagnosis of the underlying issues or challenges that need to be addressed. A strategy begins with a thorough understanding of the problem, which helps in crafting a focused and effective response. Without this, goals can be misaligned with the actual needs or challenges of the organization.

Goals often state what an organization hopes to achieve (e.g., "increase revenue by 20%" or "expand market share") but do not specify the actions required to reach these outcomes. Without a clear plan detailing how these objectives will be achieved, goals remain aspirational and directionless. A strategy not only outlines what needs to be achieved but also prioritizes and sequences the specific, coherent actions required to meet the goals within the context of the guiding policy.

A guiding policy provides a coherent approach to overcoming the identified challenges, acting as a bridge between the diagnosis and the actions. Goals without a guiding policy lack a strategic framework to direct decision-making and resource allocation.

Goals do not usually consider the constraints or limitations within which an organization operates. Strategies are developed by taking into account both the opportunities and the constraints, ensuring that the plans are realistic and executable.

While goals are necessary for setting direction and measuring performance, strategy is about how to get there.

Identifying and Addressing Key Problems

An effective strategy focuses on problems that are significant to the organization's success and within its capacity to address, rather than on obvious-looking goals. Here is what that involves.

Important Problems: These are challenges that have a significant impact on the organization's performance or strategic direction. They are critical in that solving them would lead to substantial improvements or advantages for the organization. Importance is determined by the potential impact on the organization's objectives, such as improving market position, enhancing operational efficiency, or driving innovation.

Addressable Problems: These are challenges that the organization has the capabilities to solve or at least make significant progress on. Addressability involves having or being able to develop the necessary resources, technologies, and competencies to tackle the problem effectively.

Unlike setting goals, which often describe desired end states (like "increase market share by 10%"), focusing on important and addressable problems involves a dynamic process of understanding and acting on core challenges. It's about diagnosing and then strategically responding to these challenges with coherent actions that directly tackle the issues at hand. This approach ensures that strategy is not just about hitting targets or milestones but is fundamentally about changing the conditions or dynamics that limit the organization's success.

Strategy is not about listing everything you want to accomplish; rather, it's about identifying the most pressing challenges that are blocking the path to these ambitions, and then formulating a coherent plan of action to overcome these obstacles. This requires diagnosing the real issues at hand, setting a guiding policy that addresses these issues, and then executing coherent actions that directly tackle the identified problems.

Final Thoughts

Good strategy is not easy, and bad strategy is prevalent. A well-formulated strategy is key for navigating challenges. It requires a clear understanding of the issues at hand, a well-defined guiding policy, and precise actions that are directly linked to overcoming these challenges. Just as the magnifying glass analogy teaches us to focus our power precisely on the target, so must our strategic efforts be sharply focused on the challenge we have diagnosed. To learn more about avoiding these common pitfalls and crafting effective strategies, explore Richard Rumelt’s book Good Strategy/Bad Strategy.