I'm a big fan of Simon Wardley. I can't follow everything he writes about, but even his older writings are very interesting, and somehow it all makes sense in retrospect. If you haven't read anything of his, this video is an excellent start (or go back a few years to the longer talk). In this article I'll try to make sense of what is happening in the Microservices world using Wardley's theory (and diagrams).

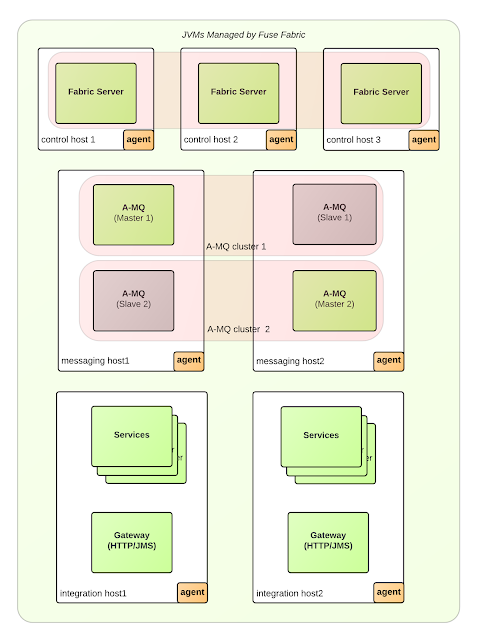

How do things evolve?

Any idea, product, or system starts with its genesis. If it is successful, it evolves: others copy it and build new custom solutions from it. If it is still successful, it diffuses further, and others create new products, which get improved and extended until they become widespread, available, “ubiquitous”, well understood, and more of a commodity. This lifecycle can be observed over the years in many successful products, such as computers, mobile phones, and virtualization/cloud.

If we think about Microservices (the architectural style, the projects that support it, the platforms that were born from it, containers, the DevOps practice, and so on), each of them is at a certain stage in the diagram above. But as a whole, the Microservices movement is now a pretty widespread, well-understood concept that is already turning into a commodity. There are many indications confirming that: the number of publications, conferences, books, confirmed success stories in production, etc. There is no doubt about it any longer.

How did we get here?

The Microservices genesis started 5-6 years ago with Fred George and James Lewis from ThoughtWorks sharing their ideas. Over the next few months ThoughtWorks did a lot of thinking, writing, and talking about it, while Netflix did a lot of hacking and created the first generation of Microservices libraries. Most of those libraries were not yet very popular or usable by the wider developer community, and only pioneers and startup-minded companies would try them at times. Then SpringSource jumped on the bandwagon: they wrapped and packaged the Netflix libraries into products and made all the custom-built solutions accessible and easy to consume for Java developers. In the meantime, all this interest in Microservices drove further innovation and containers took off. That brought in another wave of innovation, more funding, shuffling, and a new set of tools, which turned the DevOps theory into practice.

Containers, being the primary means for deploying Microservices, soon created the need for container orchestration, i.e. Cloud Native platforms. Today the Cloud Native landscape is in transition, taking its next shape. If you look around, there are multiple Cloud Native platforms, each of which started its journey at a different point in time and with a unique value proposition, but they are slowly converging on a common feature set, similar concepts, and even standards. For example, platforms such as AWS ECS, Kubernetes, Apache Mesos, and Cloud Foundry are approaching feature parity: each is feature rich, used in production, and built on comparable primitives. As you can see from the diagram above, what now becomes important as a technology strategy is to bet on platforms with open standards, open source, a large community, and a high chance of long-term success. That means, for example, choosing a container runtime that is OCI compliant, choosing a tracing tool that is based on the OpenTracing standard rather than a custom implementation, and supporting industry-standard logging and monitoring solutions that are backed by companies that are good at commodity products.
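To make the "standard rather than custom implementation" point a bit more concrete, here is a minimal sketch in Python. It is not the OpenTracing API: `Tracer`, `InMemoryTracer`, `NoopTracer`, `traced`, and `place_order` are all hypothetical names made up for illustration. The idea it tries to show is that the service code depends only on a small vendor-neutral interface, so the backend behind it can be swapped without touching the business logic.

```python
from contextlib import contextmanager
from typing import Iterator, List, Protocol


class Tracer(Protocol):
    """Hypothetical vendor-neutral tracing interface (an assumption, not a real standard API)."""

    def start_span(self, name: str) -> None: ...

    def finish_span(self, name: str) -> None: ...


class InMemoryTracer:
    """Stand-in backend that records span events in a list instead of exporting them."""

    def __init__(self) -> None:
        self.events: List[str] = []

    def start_span(self, name: str) -> None:
        self.events.append(f"start:{name}")

    def finish_span(self, name: str) -> None:
        self.events.append(f"finish:{name}")


class NoopTracer:
    """Second stand-in backend that does nothing, to show the backend can be swapped."""

    def start_span(self, name: str) -> None:
        pass

    def finish_span(self, name: str) -> None:
        pass


@contextmanager
def traced(tracer: Tracer, name: str) -> Iterator[None]:
    """Wrap a block of work in a span, finishing it even if the block raises."""
    tracer.start_span(name)
    try:
        yield
    finally:
        tracer.finish_span(name)


def place_order(tracer: Tracer, order_id: str) -> str:
    """Business logic that knows only the interface, never a concrete backend."""
    with traced(tracer, "place_order"):
        return f"order {order_id} accepted"
```

Calling `place_order(InMemoryTracer(), "42")` and `place_order(NoopTracer(), "42")` runs the same business code against two different backends. That is the kind of swap that betting on a shared standard is meant to keep cheap.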

Organization Types

According to Wardley, there are three types of people/teams/organizations, and each is good at certain stages of the evolution:

- Pioneers are good at exploring uncharted territories and undiscovered concepts. They bring the crazy ideas to life.

- Settlers are good at turning the half-baked prototype into something useful for a larger audience. They build trust and understanding, and refine the concept. They turn the prototype into a product, make it manufacturable, and make it profitable.

- Town Planners are good at taking something and industrialising it, taking advantage of economies of scale. They build the trusted platforms of the future, which requires immense skill. They find ways to make things faster, better, smaller, more efficient, more economic, and good enough.

With this definition and the above table showing the characteristics of each type of organisation, we can make the following hypothetical classification:

- Netflix are definitely the Pioneers. The creative, pathfinder people they have, the way the company is set up around experimentation and uncertainty, their culture of freedom and responsibility, and everything they have brought into the Microservices world make them pioneers.

- SpringSource, for me, are more the Settler type. They already had a popular Java stack, managed to spot the trend in Microservices, and created a good, consumable product in the form of Spring Boot and Spring Cloud.

- Amazon, Google, and Microsoft are the Town Planners. They may come late, but they come well prepared, with a long-term strategy defined, web-scale solutions, and unbeatable pricing. Platforms such as Kubernetes and ECS (not entirely sure about the latter, as it is pretty closed) are built on over 10 years of experience and are intended to last long and become the industry standard.

Conclusion

In the Microservices world, things are moving from the uncharted towards the industrialised. Most of the activities are no longer that chaotic, uncertain, and unpredictable. Planning, designing, and implementing Microservices is almost turning into a boring and dull activity. And since it is an industrialised job with a low margin, the choice of tools and the ability to swap platforms play a significant role.

Last but not least, a nice side effect of this evolution is that we should hear less about Conway's Law, two-pizza teams, and circuit breakers at conferences, and more about managing Microservices at scale, automation, business value, serverless, and new uncharted ideas from the pioneers in our industry.